Software, meet your new user: agents

Reflecting on where we stand in AI, six months into the agentic age

The difference between the models we had in mid-2025 and the models we have now is hard to overstate.

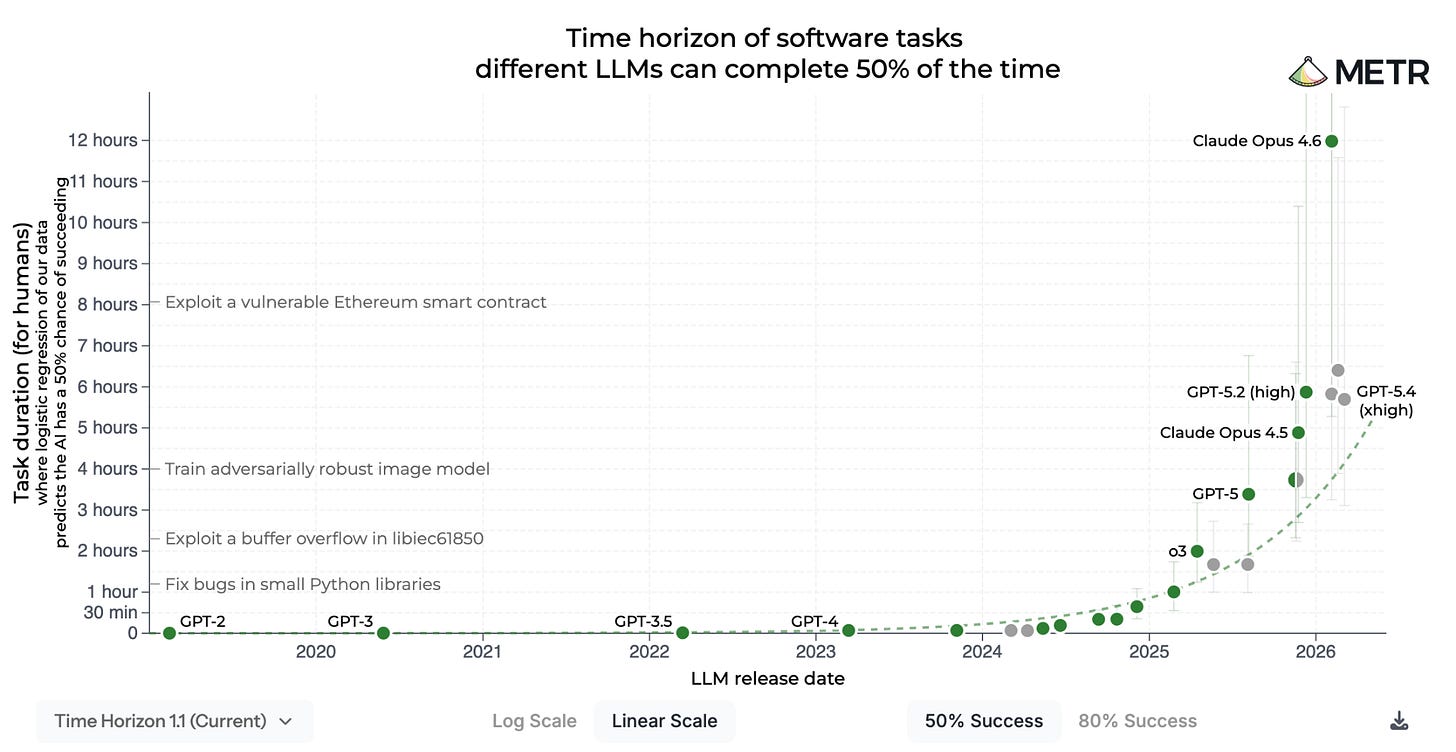

According to METR, the longest task an AI agent can complete autonomously has expanded from 2 hours a year ago to 12 hours today. OpenClaw, an always-on open-source AI assistant, surpassed Linux’s GitHub star count within three months of launch: a milestone that took Linux 15 years. Claude Code, just over a year old, now generates 4% of all public GitHub commits: a figure SemiAnalysis projects will exceed 20% by year’s end.

Long-horizon autonomy is the third major inflection point that we’ve seen in AI in three years: first, the ChatGPT moment, which surfaced the power of pre-training and RLHF; then the o1 moment, which introduced inference-time compute as a second scaling law; and now the long-horizon agent moment, where models can plan, act, recover from failure, and persist until a job is done.

Enterprises are pulling AI into every workflow. At the same time, the gap between what frontier models can achieve and what’s running in production at the average Fortune 500 continues to widen. That gap is the opportunity founders are building into. The pattern mirrors where the PC was in 1991 and the internet in 2000: awareness is near-universal and adoption is exponential, but most of the category-defining products, along with the deeper rewiring of how work gets done, are still ahead.

This was the frame for a fireside chat my partner Jaya hosted last week with two of our founders - Aparna, co-founder and CPO of Arize, and Jonathan, co-founder and CEO of Turing - at our annual investor meeting. Both emphasized how quickly the ground is moving underfoot, and how this reshapes how builders should think about both what they’re building and how.

Here are a few takeaways from the panel that founders should internalize.

1. Build for a future where agents are your primary users

Jonathan’s operating principle at Turing is that most knowledge work should be “agents first, humans second.” Instead of a person leading the work and looping in an AI tool for help, the agent starts the task, and the human verifies, redirects, and supplies judgment where needed.

Aparna sees the same shift happening outside engineering. At Arize, marketing teams kick off an agent before doing anything themselves. Slack, Salesforce, Datadog, and other internal systems are all connected to Arize’s agents. These tools are becoming agent-facing work environments rather than human-facing systems of record.

The implication is that if your product doesn’t have good APIs, isn’t usable by an agent like Claude, and isn’t legible to the way agents work, it’s still built for the old world. Humans may still click through dashboards, but the highest-leverage users will increasingly be agents.

2. Make the model pluggable

Two years ago, the most advanced strategy in enterprise AI was to fine-tune a small model on proprietary data.

Both Jonathan and Aparna are now firmly in the “no-fine-tuning camp.” In Aparna’s experience, the engineer-hours required to maintain a fine-tune for cost optimization typically exceed whatever savings the fine-tune produces. Her heuristic is that the model should be pluggable: swappable for another frontier model in a day, with the harness around it agnostic to the weights that are running underneath.

“Harness” is a loose, still-evolving term, and different teams use it to mean different things. At its core, it’s the engineering layer between the user and the model that handles routing, memory, verification, and the orchestration of multi-step work. A lot of what gets called the harness is, in practice, conventional software engineering: rules-based decisions you have to make regardless of which model is running underneath.
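To make the “pluggable” idea concrete, here is a minimal sketch, not anyone’s actual architecture. The names `Model`, `EchoModel`, and `Harness` are hypothetical, and `EchoModel` is a stand-in for a real provider client so the example runs without network access. The point it illustrates is that verification, retries, and memory live in the harness, so swapping the model is a one-line change.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class Model(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for a real provider client; makes no network calls."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class Harness:
    """Model-agnostic layer: verification, retries, and memory live here."""

    model: Model
    verify: Callable[[str], bool]
    max_attempts: int = 2

    def __post_init__(self) -> None:
        # Memory belongs to the harness, so it survives a model swap.
        self.memory: list[str] = []

    def run(self, task: str) -> str:
        for _ in range(self.max_attempts):
            answer = self.model.complete(task)
            if self.verify(answer):
                self.memory.append(answer)
                return answer
        raise RuntimeError("no answer passed verification")


harness = Harness(model=EchoModel("model-a"), verify=lambda text: len(text) > 0)
first = harness.run("summarize the ticket")

# Swapping the underlying model does not touch the harness logic or its memory.
harness.model = EchoModel("model-b")
second = harness.run("summarize the ticket")
```

In a real system the `verify` callable would be where rules-based checks go, which is the “conventional software engineering” part of the harness.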

3. Own the context graph

The context graph, an idea Jaya and I first shared last December, captures what a company’s proprietary asset looks like in an agentic world.

The premise is that a company’s edge isn’t solely its data, but the way that data is navigated and negotiated across systems to make decisions: the judgment calls, the exceptions, and the edge cases that don’t fit neatly into any single system. The context graph captures all of this data - what happened, why, and what the outcome was - and it grows more valuable with every decision the company makes.

Aparna gave an example. Suppose you want to know which users are using your product in a specific way, and how to target more users like them. In the past, that question would require someone from product, marketing, and sales to each pull their own view of the customer, then stitch together a strategy by hand. An agent can increasingly do that stitching itself, but only if it has access to the same underlying systems and context those humans were using.

Most enterprises don’t have this yet. Making that decision-making substrate legible and addressable to an agent is unglamorous organizational work, but it’s increasingly a critical moat.
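One way to picture the context graph described above is as a log of decisions indexed by the entities they touch. The sketch below is an illustration under assumptions: the `Decision` and `ContextGraph` classes, the field names, and the `system:id` entity format are all hypothetical, and a production version would sit on real systems rather than an in-memory dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One recorded decision: what happened, why, and what the outcome was."""

    what: str
    why: str
    outcome: str
    entities: tuple[str, ...]  # hypothetical cross-system IDs, e.g. "crm:acme"


class ContextGraph:
    """Decisions indexed by every entity they involve."""

    def __init__(self) -> None:
        self._by_entity: dict[str, list[Decision]] = defaultdict(list)

    def record(self, decision: Decision) -> None:
        for entity in decision.entities:
            self._by_entity[entity].append(decision)

    def history(self, entity: str) -> list[Decision]:
        """All decisions that involved this entity, in recorded order."""
        return list(self._by_entity.get(entity, []))

    def related(self, entity: str) -> set[str]:
        """Entities that share at least one decision with `entity`."""
        linked = {e for d in self.history(entity) for e in d.entities}
        linked.discard(entity)
        return linked


graph = ContextGraph()
graph.record(Decision("approved 20% discount", "renewal at risk", "renewed",
                      ("crm:acme", "billing:inv-42")))
graph.record(Decision("escalated outage ticket", "enterprise SLA", "resolved",
                      ("crm:acme", "support:t-7")))
```

An agent asking about the account `crm:acme` can then pull both the decision history and the billing and support records linked to it, which is the cross-system stitching that humans used to do by hand.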

4. The feedback loop is the product

Jonathan’s read is that the extent to which AI has transformed the Fortune 500 to date “rounds down to almost zero.” Even at the most sophisticated firms that Turing partners with, the impact on P&L has been limited so far, even as demand from enterprise buyers continues to ramp.

The reason, in Aparna’s framing, is not model capability but poor deployment. The companies getting the most value from agents treat them the way they’d treat a new hire, with full onboarding, ongoing feedback, and a manager invested in their performance. They instrument the agent’s work, surface where it falls short, and feed those findings back into the next iteration.

Closing this loop is what makes self-improving software possible. Aparna’s vision for Arize is one where the platform is used primarily by agents that consume their own observability data, identify where they’re failing, write evals, and ship the next iteration. This self-improving loop becomes the product.

Realizing this vision requires deployment infrastructure that enterprises haven’t built yet, including unsolved pieces like agent identity: how agents authenticate, what they can access, what actions they’re allowed to take, and who is accountable when they act.
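The agent-identity questions map onto a fairly small data model, sketched here under assumptions. `AgentIdentity`, `Gatekeeper`, and the action strings are hypothetical names for illustration, and real authentication (credentials, token issuance) is deliberately left out. The sketch covers only what an agent is allowed to do, who is accountable, and an audit trail of every attempt.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record for an agent."""

    agent_id: str
    owner: str  # the human accountable when this agent acts
    allowed_actions: frozenset[str]


@dataclass
class Gatekeeper:
    """Checks each requested action and logs the attempt either way."""

    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, action: str) -> bool:
        allowed = action in agent.allowed_actions
        # Record (agent, accountable owner, action, decision) for later review.
        self.audit_log.append((agent.agent_id, agent.owner, action, allowed))
        return allowed


agent = AgentIdentity(
    agent_id="marketing-agent-1",
    owner="jane@example.com",
    allowed_actions=frozenset({"crm:read", "slack:post"}),
)
gate = Gatekeeper()
can_read = gate.authorize(agent, "crm:read")
can_delete = gate.authorize(agent, "crm:delete")
```

Denied attempts are logged alongside allowed ones, so a reviewer can see where an agent tried to step outside its scope and who owns it.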

5. Knowledge work will become an ongoing relationship with agents

Three years from now, Jonathan imagines agents that run for months at a time, perhaps even longer. They’ll call tools, collaborate with other agents, and loop in humans when they need judgment or input. The human role will shift from execution to direction and verification.

If this trajectory is right, the unit of knowledge work changes from isolated chats to an ongoing relationship between a human and agents.

This creates a new set of product-design questions. How does a person check in with an agent that’s been running for months? What does the inbox look like when someone has hundreds of agents working at the same time? How do managers review work that was mostly done by agents? Delegation also takes a different shape when the worker you’re delegating to is always on. All of these are open design and product questions for founders to experiment with and solve.

6. Every founder is now massively leveraged

The conversation closed on what aspects of work AI won’t touch. Aparna pointed to recruiting, maintaining company culture, and enterprise sales: the kind of trust that only gets built when two people are in the same room. Jonathan’s list was similar: sales, recruiting, and other roles where building relationships is the product. All of that stays human. So do the choices that precede it: what to build, who to build it with, what the company stands for.

Everything operationally downstream of those choices is becoming massively leveraged. As Jonathan put it, we’ll all be humans in the loop, just dramatically more leveraged ones, with founders running companies that consist of hundreds of agents.

What a company can be, and what a small team can build, are both more open than they’ve been in a generation. This is a moment for founders to be massively ambitious about both.